You have probably seen the same take over and over:

“Gemini 3 is crazy. I am not going back to ChatGPT.”

And honestly the hype is not totally wrong.

Google’s Gemini 3 Pro has rocketed straight to the top of most public leaderboards. It is beating OpenAI’s GPT-5.1 and Anthropic’s Claude Sonnet 4.5 across the majority of benchmarks released by Google, including math, multimodal reasoning and long-context tasks. On paper it is not just competitive: it is the new boss.

But there is a catch.

If you want to build serious products on Gemini 3, you are not just picking a model. You are effectively picking Google Cloud Platform as your home base.

This article is for curious builders who are excited about Gemini 3, but also wondering:

- What does “GCP lock-in” actually mean in practice?

- What do I gain versus lose if I go all in on Gemini 3?

- How does that compare to building on OpenAI (Azure/API) or Anthropic (AWS/Bedrock)?

Let’s unpack it.

Why Everyone Is Losing Their Minds Over Gemini 3

Before we talk about lock-in, it is worth being clear about why people are so hyped.

1. It’s (currently) the benchmark king

Gemini 3 Pro is right now the front-runner on most public benchmark tables. It leads on multimodal reasoning tests such as MMMU-Pro (university-level questions across text plus images). It crushes complex reasoning puzzles like ARC-AGI-2. It hits top-tier scores on math and science benchmarks that used to be GPT-only territory. Community leaderboards such as LMArena/WebDev show it at or near the very top.

Multiple independent write-ups converge on the same narrative: Gemini 3 peaks higher than GPT-5.1 and Claude Sonnet 4.5 in raw reasoning and multimodal intelligence. Benchmarks are not the whole story but they are not nothing either.

2. Native multimodal plus absurdly large context

Gemini 3 is not a text model with multimodal bolted on. It is natively multimodal: text, images, audio, video, code, all in one context. It also supports a one-million token context window. That is enough to stuff in entire codebases, massive PDFs, long chat histories or multi-day agent traces without playing chunking tetris.

If your use case involves large documents or data rooms, full-repo reasoning for code assist, or multi-step multimodal agents, this is a huge advantage.
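Before betting on the "stuff everything in" approach, it helps to check whether your material actually fits. Here is a minimal sketch of that check. The four-characters-per-token ratio, the file suffixes, and the output reserve are all assumptions for illustration; a real tokenizer will give different numbers, so treat the result as a ballpark only.

```python
from pathlib import Path
from typing import Tuple

CONTEXT_WINDOW_TOKENS = 1_000_000  # window size cited in this article
CHARS_PER_TOKEN = 4                # assumption: rough average, not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count."""
    return len(text) // CHARS_PER_TOKEN + 1


def repo_token_estimate(root: str, suffixes: Tuple[str, ...] = (".py", ".md", ".ts")) -> int:
    """Sum the estimated tokens of every matching file under root."""
    total = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            total += estimate_tokens(path.read_text(encoding="utf-8", errors="ignore"))
    return total


def fits_in_context(tokens: int, reserve_for_output: int = 50_000) -> bool:
    """Keep headroom for instructions and the model's reply (reserve is an assumption)."""
    return tokens + reserve_for_output <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    tokens = repo_token_estimate(".")
    print(f"~{tokens:,} tokens; fits in one request: {fits_in_context(tokens)}")
```

If the estimate lands anywhere near the limit, you are back to chunking or retrieval, so the large window helps most when you have comfortable headroom.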

3. Built for agents and “builder first” workflows

Gemini 3 is not just a chat model; it is clearly designed to power agents and developer workflows. Google’s new Antigravity IDE is literally an agent-first coding environment with Gemini 3 Pro at its center. Gemini 3 Pro is wired into Google AI Studio, Vertex AI and third-party tools like Cursor, GitHub and Replit. It’s tuned for agentic coding, tool use and “vibe coding”: generating entire apps, refactors and flows, not just snippets.

A lot of developers testing it describe the experience as: “This is the first time an AI model feels like a real senior dev pair-programmer.” And then there is the reach: Google pushed Gemini 3 into Search, the Gemini app and enterprise tools almost immediately. That is billions of users being touched by this model on day one.

So yes: as a model Gemini 3 is absolutely not overhyped.

Now let’s talk about the price of admission.

The Catch: Gemini 3 Lives on GCP

This is the part that matters for architecture and long-term strategy.

To build with Gemini 3 at scale you are essentially going through Google’s stack:

- Gemini API/Google AI Studio for prototyping and light usage

- Vertex AI for production workloads and enterprise deployments

- Gemini Enterprise/Gemini for Workspace if you are doing deeper integration into Docs, Gmail, etc.

There is no “download a model and run it on your own infrastructure”. There is no “we are on AWS but we’ll just plug Gemini into our VPC locally”.

Yes, you can call the Gemini API from anywhere. But the compute, billing, limits and control are firmly inside Google’s world.

For builders this raises an important question: Are you okay tying a core part of your product’s brain to one cloud vendor and specifically to Google?

Let’s look at both the upsides and the trade-offs of leaning into GCP.

The Upside of Going All In on Gemini 3 + GCP

If you accept the GCP alignment, you get some powerful benefits.

✅ You get the best model (for now)

If you are optimizing first and foremost for raw capability, Gemini 3 Pro is the obvious pick:

- Stronger reasoning than GPT-5.1 in most tests

- Better multimodal understanding than Claude Sonnet 4.5

- A context window no one else is matching yet

OpenAI and Anthropic are still fantastic, but Gemini 3 is the current frontier.

If your brand or product positioning includes “powered by the world’s most advanced AI” Gemini gives you a more defensible talking point, especially in categories like deep code assist, agentic workflows or multimodal analysis at scale.

✅ Tight integration with the Google universe

If you are already using Google Cloud (BigQuery, Cloud Storage, etc.) or Google Workspace (Docs, Sheets, Gmail), then Gemini 3 fits beautifully.

Examples of what that looks like in practice:

- Using Gemini 3 in Vertex AI to build agents grounded in BigQuery data

- Having Gemini 3 show up inside Docs, Gmail, Slides so your product workflows piggyback off those

- Letting Gemini Agents automate actions across Google’s ecosystem (Search, Drive, Calendar…) so your app doesn’t reinvent those tools

This is the classic “platform halo”: once you are in Google land a lot of things just talk to each other in ways that feel almost unfair compared to stitching everything yourself.

✅ Day-zero support for the open-source ecosystem

One underrated move: Google didn’t just ship Gemini 3; they shipped it with the ecosystem already wired up. On launch Gemini 3 Pro had integration with LangChain, LlamaIndex, Vercel AI SDK, n8n and other popular agent frameworks and gateways. That means you can plug Gemini 3 into existing LLM workflows with minimal friction.

If you’ve already built on “generic LLM infra” (LangChain, etc.), you can often swap GPT-4/5 → Gemini 3 with one config change and a week or two of prompt tuning.

✅ Enterprise-ready from the start

Because it lives inside GCP, Gemini 3 benefits from all the usual enterprise goodies:

- IAM and org policies

- VPC, private networking, regional isolation

- Vertex AI’s monitoring, evaluation and governance tools

- Enterprise support contracts

If you are building for big companies, especially ones already on GCP, this is a big deal. “We use Gemini 3 on Vertex AI” is a lot easier to get past a security review than “we’re hitting some random third-party API from our prod network.”

The Downside: What GCP Lock-In Really Costs You

Now the uncomfortable but necessary part.

⚠️ You’re betting your AI core on a single vendor

Using a closed hosted model always creates dependency. With Gemini 3 that dependency is amplified:

- The model only runs on Google infrastructure

- Pricing, quotas and roadmap are entirely controlled by Google

- If Google decides to change terms, pricing or rate limits you eat that

Yes, you can add an abstraction layer and use multiple providers, but in practice many teams that go deep on one model end up implicitly tied to its quirks and strengths.

If your entire product feels like Gemini 3, migrating away later will not be trivial.

⚠️ You may be juggling multi-cloud for real

If you’re already on AWS or Azure, choosing Gemini 3 effectively forces a multi-cloud architecture:

- Your app and data on AWS/Azure

- Your AI brain living in GCP

- Networking, latency and compliance complexity sitting in between

Some teams are fine with this. Others don’t want to manage two sets of IAM, identity, cost, and network/security policies. If you’re early stage and don’t have a DevOps team fluent in Terraform, this can be a real drag.

⚠️ Pricing: premium, not cheap

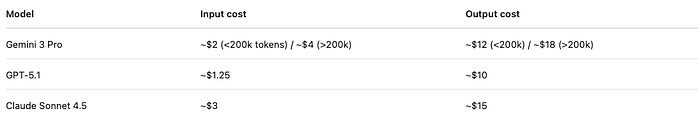

Rough published pricing for flagship models (per one-million tokens, ignoring volume discounts and promos):

There are nuances (prompt caching, enterprise tiers, etc.) but the broad picture is: GPT-5.1 is cheapest; Gemini sits in the middle; Claude is most expensive. If you build an AI-native app that chews through millions of tokens, that 2× difference vs OpenAI is not a rounding error.
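To see why a 2× gap matters at volume, here is a back-of-the-envelope calculation. Note the loud caveat: the prices and the monthly workload below are placeholders chosen only to mirror a 2× ratio, not any vendor's published rates. Plug in the current numbers from each provider's pricing page before drawing conclusions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    """USD per one million tokens."""
    input_per_m: float
    output_per_m: float


def monthly_cost(price: Price, input_tokens: int, output_tokens: int) -> float:
    """Token spend only; ignores caching, volume discounts and enterprise tiers."""
    return (input_tokens / 1_000_000) * price.input_per_m + (
        output_tokens / 1_000_000
    ) * price.output_per_m


# PLACEHOLDER prices (not published rates): the second is exactly 2x the first.
cheaper = Price(input_per_m=1.0, output_per_m=8.0)
pricier = Price(input_per_m=2.0, output_per_m=16.0)

# Hypothetical workload: 2B input tokens and 300M output tokens per month.
usage = {"input_tokens": 2_000_000_000, "output_tokens": 300_000_000}

cheaper_cost = monthly_cost(cheaper, **usage)
pricier_cost = monthly_cost(pricier, **usage)
print(f"cheaper: ${cheaper_cost:,.0f}/month, pricier: ${pricier_cost:,.0f}/month")
print(f"extra spend per year: ${(pricier_cost - cheaper_cost) * 12:,.0f}")
```

With these placeholder inputs the gap is $4,400 per month, or $52,800 per year, which is the kind of line item that shows up in a budget review.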

⚠️ You have less control over the model itself

All three are closed models, but at least with OpenAI and Anthropic you have more flexibility:

- You can mix and match clouds

- You can choose between model sizes and tiers

- They blend more easily into your existing stack

With Gemini the pull is stronger: new features land in GCP first; extras like agent tooling and image/video models are tightly tied to Google’s infra; useful tooling like search grounding is built around Google Search. You are not just picking a model; you’re increasingly adopting Google’s full-stack view of AI.

⚠️ It’s still early and preview rough edges are real

If you lurk on Reddit or dev blogs, you’ll see the pattern: tons of praise for Gemini 3 Pro’s raw intelligence, but also bug reports and complaints about early-release quirks: search tools misfiring, certain tool calls failing, weird rate limits. That is normal for a fresh model, but if you’re banking your startup on it, you want to account for operational maturity. OpenAI and Anthropic have had years of battle-testing in production.
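One common way to account for that kind of flakiness is to wrap model calls in retries with exponential backoff and fall through to a second provider when the first keeps failing. The sketch below shows the idea using stub functions. `ProviderError`, `call_with_fallback` and both stub providers are hypothetical names made up for this example, not part of any vendor SDK.

```python
import random
import time
from typing import Callable, Optional, Sequence


class ProviderError(Exception):
    """Hypothetical error for a failed call (rate limit, tool-call failure, ...)."""


def call_with_fallback(
    providers: Sequence[Callable[[str], str]],
    prompt: str,
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Try providers in order; retry each with exponential backoff plus jitter."""
    last_error: Optional[Exception] = None
    for provider in providers:
        for attempt in range(max_attempts):
            try:
                return provider(prompt)
            except ProviderError as err:
                last_error = err
                if attempt < max_attempts - 1:
                    # Delays grow 0.5s, 1s, 2s, ... with a little random jitter.
                    sleep(base_delay * (2 ** attempt) + random.uniform(0, 0.1))
    raise RuntimeError("all providers failed") from last_error


# Stub providers standing in for real SDK calls.
def always_rate_limited(prompt: str) -> str:
    raise ProviderError("rate limit hit")


def backup(prompt: str) -> str:
    return f"backup handled: {prompt}"


answer = call_with_fallback(
    [always_rate_limited, backup], "summarize this", sleep=lambda _s: None
)
print(answer)
```

The `sleep` parameter is injectable so the backoff can be skipped in tests. In production you would leave the default and likely add logging around each failure.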

The Alternatives: OpenAI and Anthropic Without the GCP Strings

If you decide “I don’t want my core AI tied to GCP” your main closed-model alternatives are:

- OpenAI (GPT-4 / GPT-5 / GPT-5.1) via OpenAI’s public API or Azure OpenAI for enterprise.

- Anthropic (Claude Sonnet 4.5 and the newly released Claude Opus 4.5) via Anthropic’s API, AWS Bedrock or even Google’s Vertex AI (through Model Garden).

Let’s hit the highlights.

Option: OpenAI (GPT-5.1), Flexible and Cost-Efficient

For many builders OpenAI is still the default choice, and that’s not just inertia.

Why pick OpenAI:

- Cloud-neutral by default: call OpenAI API from anywhere.

- Azure OpenAI for enterprise: private networking, compliance, Microsoft backing.

- Great developer experience: mature docs, SDKs, community forums, example code.

- Excellent price/performance: nearly as smart as the frontier models in many tasks while costing less.

- Huge ecosystem: most third-party tools and no-code platforms support GPT models first.

In many “normal” SaaS use cases (chatbots, internal copilots, analytics assistants, developer tools), GPT-5.1 is more than good enough and likely the most efficient path.

Option: Anthropic (Claude Sonnet 4.5 / Opus 4.5), Long-Horizon and Safety-First

Anthropic is playing a slightly different game: they prioritize safety, reliability and extended coherence.

Why choose Claude:

- Excellent at long-running, autonomous workflows: there are reports of Claude Sonnet 4.5 or Opus 4.5 running for 30+ hours of autonomous coding without losing coherence.

- Top tier coding model: Anthropic markets Opus 4.5 as best in class for coding and agents in late 2025.

- Multi-cloud availability: AWS Bedrock, Anthropic API, and even Google’s Vertex support give you platform flexibility.

- Safety-focused: designed to reduce hallucinations and harmful outputs, and to provide stronger guardrails.

Downsides: generally higher cost per token; more conservative behaviour (which may slow rapid exploration); smaller community ecosystem (still growing). Claude is a great fit if you are building long-horizon AI agents, complex workflow systems or apps where “don’t mess up” matters more than “get it done fast”.

So… Which Way Should You Go?

Let’s boil it down into a decision framework for builders.

Go with Gemini 3 on GCP if:

- You want the strongest frontier model right now, especially for multimodal tasks, full-repo reasoning or agentic workflows.

- You’re already invested in Google Cloud Platform or comfortable building there.

- Your product can meaningfully exploit Gemini’s unique capabilities: one-million token context, tight Google integration, or complex agents.

- You’re okay with higher token costs in exchange for peak capability.

Go with OpenAI GPT-5.1 if:

- You want the best balance of performance, price and flexibility.

- Your infra is on AWS, Azure or elsewhere and you don’t want to adopt another major cloud just for the model.

- You care about ecosystem maturity, third-party tools, and predictable operations.

- You are dealing with many short or medium-length interactions: chatbots, copilots, customer support assistants, internal helpers.

- You’d like the option to pivot later without rewriting half your stack.

Go with Anthropic Claude Sonnet 4.5 or Opus 4.5 if:

- You’re building long-horizon agents, full-repo refactoring systems, research assistants or anything where “runs for hours” and “maintains consistency” matter.

- You are in a regulated domain or you need higher guardrails and stricter safety.

- You are on AWS or you want to go deep into Bedrock or multi-cloud strategy.

- You’re okay paying more for reliability and cautious reasoning.

And remember: you don’t have to pick just one model.

A very practical strategy is:

- Use OpenAI GPT-5.1 as the default workhorse: cheap, fast, good enough.

- Use Gemini 3 for “boss-fight” tasks: multimodal, agentic, full-repo reasoning.

- Use Claude selectively for safety-critical, long-running flows or heavy coding agents.

Architecturally, that means: one internal LLM gateway service, config-driven model choice, and A/B testing around cost and accuracy. Yes, it adds a little overhead. But it gives you leverage: you’re not locked into one provider’s roadmap or pricing changes.
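As a rough illustration of that gateway idea, here is a minimal sketch with config-driven routing and sticky A/B assignment. Everything in it is an assumption for the sake of the example: the provider functions are stubs rather than real SDK calls, the model labels are routing keys rather than official API model IDs, and the task names and traffic share are invented.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable, Dict, Optional


# Hypothetical stand-ins for real provider SDK calls; no network involved.
def call_gpt(prompt: str) -> str:
    return f"[gpt-5.1] {prompt}"


def call_gemini(prompt: str) -> str:
    return f"[gemini-3-pro] {prompt}"


def call_claude(prompt: str) -> str:
    return f"[claude] {prompt}"


@dataclass(frozen=True)
class Route:
    default: str                      # model used for most traffic
    challenger: Optional[str] = None  # model being A/B tested, if any
    challenger_share: float = 0.0     # fraction of users sent to the challenger


class LLMGateway:
    def __init__(self, providers: Dict[str, Callable[[str], str]], config: Dict[str, Route]):
        self.providers = providers
        self.config = config

    @staticmethod
    def _bucket(user_id: str) -> float:
        """Map a user id to a stable value in [0, 1) so A/B assignment is sticky."""
        digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
        return (int(digest, 16) % 10_000) / 10_000

    def pick_model(self, task: str, user_id: str) -> str:
        route = self.config[task]
        if route.challenger and self._bucket(user_id) < route.challenger_share:
            return route.challenger
        return route.default

    def complete(self, task: str, user_id: str, prompt: str) -> str:
        return self.providers[self.pick_model(task, user_id)](prompt)


PROVIDERS = {"gpt-5.1": call_gpt, "gemini-3-pro": call_gemini, "claude": call_claude}

# Changing models is a config edit, not a code change.
CONFIG = {
    "chat": Route(default="gpt-5.1", challenger="gemini-3-pro", challenger_share=0.1),
    "multimodal": Route(default="gemini-3-pro"),
    "long_running_agent": Route(default="claude"),
}

gateway = LLMGateway(PROVIDERS, CONFIG)
print(gateway.complete("multimodal", "user-42", "Describe this diagram"))
```

Hashing the user ID keeps each user on the same model across requests, which makes cost and accuracy comparisons between the default and the challenger cleaner than per-request random assignment would.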

Final Take

Gemini 3 is absolutely worth the excitement. If you only care about “what’s the smartest closed model I can hit with an API today?” then Gemini 3 Pro is probably your answer.

But shipping real products isn’t just about having the smartest model. It’s about:

- Total cost (token spend, engineering effort, ops)

- Platform risk (which vendor you depend on)

- Fit with your existing stack and users

If you’ve already committed to GCP and you can make the most of Gemini’s strengths, go for it. But if you value flexibility or lower cost, or you are building on a different cloud, OpenAI or Anthropic might be the wiser choice.

In short: Gemini 3 is the genius you date if you’re okay moving into their house. OpenAI is the pragmatic partner who lets you keep your apartment. Anthropic is the careful long-term thinker you call when you need everything bullet-proof.

Pick the one whose trade-offs match your roadmap, not just today’s leaderboard screenshot.